What My 1997 NASA Internship Taught Me About AI's Real Timeline

In the summer of 1997, I was a premed student at NYU with a secret: I was way more interested in computers than cadavers. So when I landed a research internship at NASA's biology labs at Kennedy Space Center in Cape Canaveral, Florida, I told myself it was the perfect compromise. Computers AND biology. Science AND technology. Mom would be proud.

I was twenty years old, riding a Greyhound-style bus from the intern housing to the Space Center every morning, wearing my NASA badge like it was a backstage pass to the future. Which, in a way, it was. I just didn't know it yet.

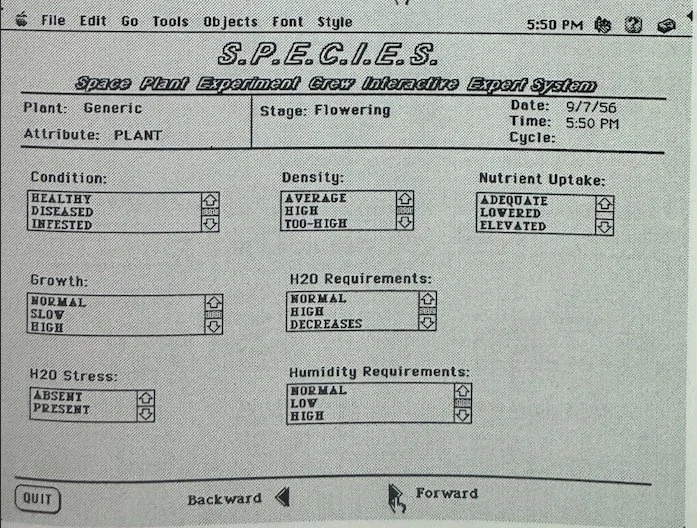

That summer, I worked on projects at the intersection of computational biology and what we loosely called "artificial intelligence," though the term meant something radically different in 1997 than it does today. We weren't building large language models. We weren't training neural networks on terabytes of data. We were writing BASIC and early Python scripts on machines with processing power that your Apple Watch would now laugh at, trying to get computers to recognize patterns in biological datasets.

It was painstaking, slow, and often frustrating work. But it planted a seed in my brain that wouldn't fully sprout for almost three decades: the idea that computers could eventually see patterns that humans couldn't. That artificial intelligence, in its fullest expression, would one day transform not just how we process information, but how we understand life itself.

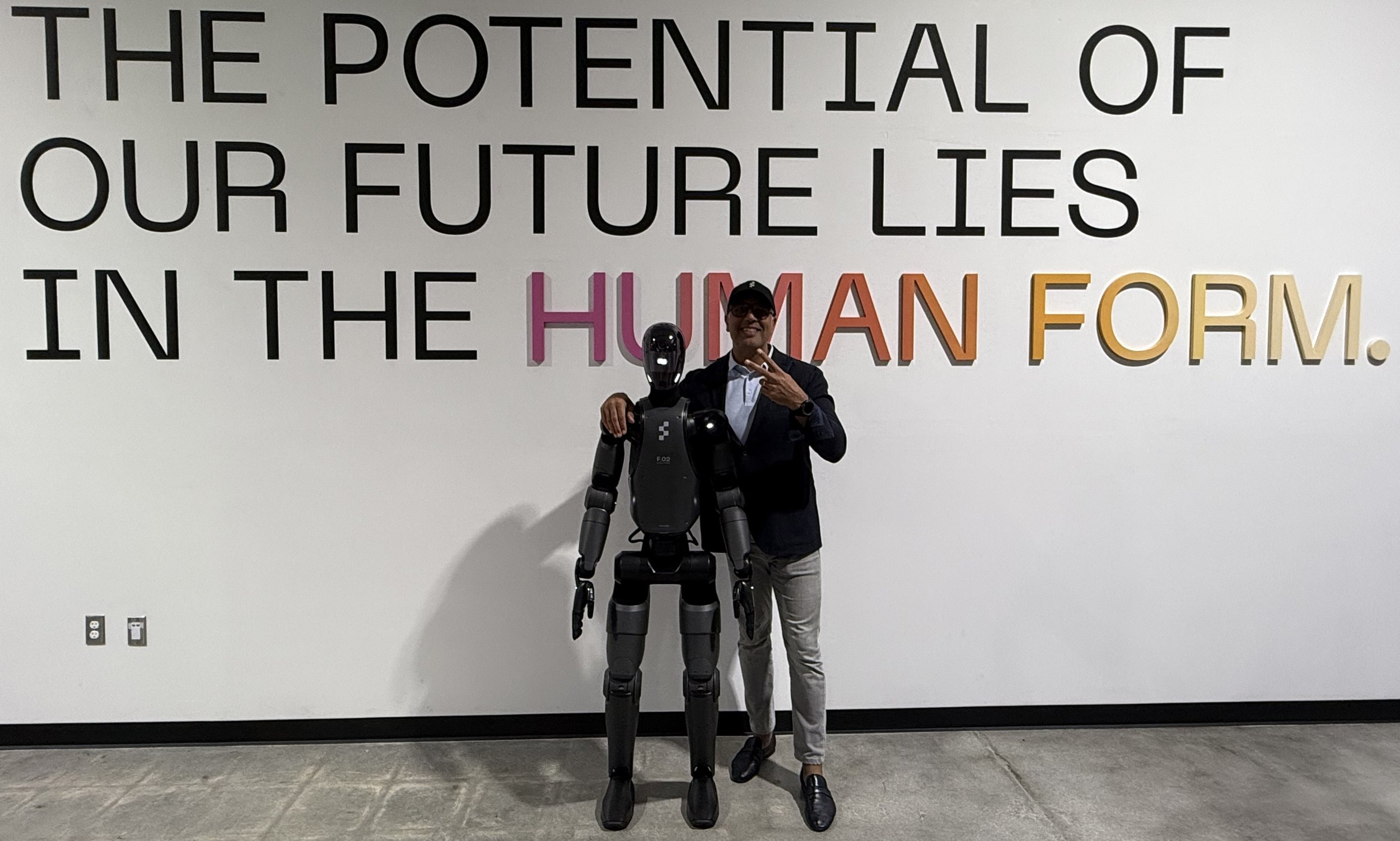

Fast forward 28 years, and I'm sitting in my office in South Florida as an investor in companies like Figure AI (humanoid robots that learn in real time), SandboxAQ (quantum AI that's converging large language models with large quantitative models), and Scout AI (a company pushing the boundaries of intelligent systems). And I keep thinking about that summer at Cape Canaveral.

Because what I learned at NASA in 1997 isn't just a fun origin story. It's a lens for understanding something that most people in the technology world are getting wrong right now: the timeline.

What AI Looked Like in 1997

Let me paint you a picture of the state of the art in artificial intelligence when I was interning at NASA.

The internet was still a novelty. Google wouldn't be founded for another year. The Human Genome Project was nowhere near complete. The most powerful computer most people had access to was a Pentium II running Windows 95, and you were lucky if it didn't crash twice a day.

In the lab, "artificial intelligence" meant rule-based systems. Expert systems. Programs that could follow logic trees but couldn't learn from experience in any meaningful way. The idea of a machine that could hold a conversation, write an essay, generate code, or recognize a face in a photograph was so far from reality that it would have sounded like science fiction.

And yet, even then, there were glimmers. The biological pattern recognition work we were doing at NASA hinted at something bigger. If you could train a computer to spot anomalies in plant growth data under microgravity conditions (which is essentially what we were working on), then theoretically, you could train it to spot anomalies in anything: medical imaging, financial markets, manufacturing defects, human behavior.

The capability was there in embryonic form. What was missing was compute power, data, and about two decades of algorithmic breakthroughs.

The 28-Year Gap and What It Reveals

Here's what keeps me up at night (in a good way): the gap between what I saw at NASA in 1997 and what I see in my portfolio companies in 2026 is not just large. It's nonlinear. The progress didn't happen in a straight line. It happened in fits and starts, with long periods of seeming stagnation followed by explosive breakthroughs.

From 1997 to roughly 2012, AI progress was glacial by today's standards. Better algorithms, more data, faster processors, but nothing that would have made a non-specialist sit up and take notice. Then deep learning took off. Then GPT models happened. Then multimodal AI. Then humanoid robotics. Then quantum AI convergence.

In the last four years alone, we've seen more transformative AI progress than in the previous forty. And this is the part that most VCs, most founders, and most policymakers are struggling to internalize: the rate of change is accelerating, not just continuing.

When I was at NASA, if you'd told me that within my lifetime we'd have machines that could reason, create art, write code, diagnose diseases, and operate physical bodies in real-world environments, I would have said "maybe, in a hundred years." It took 28.

Why Most People Get the Timeline Wrong

Investors, in particular, are terrible at understanding technology timelines. And I include myself in that criticism. We tend to oscillate between two extremes: either we believe something is imminent (and invest too aggressively too early) or we believe it's decades away (and miss the inflection point entirely).

I've made both mistakes. At Offyx, my first startup in 1999, we built a product that was technically sound but about five years too early for the market. The technology wasn't ready, the infrastructure wasn't there, and the users weren't yet comfortable with what we were offering. We were right about the direction. We were wrong about the timing. And in startups, being early is functionally identical to being wrong.

On the other hand, I've also been guilty of underestimating timelines. When I first heard about Bitcoin and blockchain technology, I dismissed it as a niche curiosity. By the time I invested in Kraken, the opportunity was still enormous, but I could have been much earlier if I'd taken the timeline more seriously.

What my NASA experience taught me, and what I've been refining ever since, is a framework for thinking about technology timelines that I call "the seed and the soil." The seed is the core technological insight. The soil is the ecosystem of infrastructure, capital, talent, and market readiness that allows that insight to grow. In 1997 at NASA, the seed of modern AI was planted. But the soil wasn't ready. It would take another two decades of Moore's Law, internet proliferation, data accumulation, and algorithm development for that seed to germinate.

When I evaluate investments today, I'm always asking: is the seed real? And is the soil ready? If both answers are yes, I want to be in. If the seed is real but the soil isn't ready, I'm cautious. And if I can't tell whether the seed is real, I do more research before committing.
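For readers who think in code, here is a minimal sketch of that decision rule in Python. The names (`Stance`, `seed_and_soil`) are made up for illustration, and the `PASS` outcome for a seed that turns out not to be real is my assumption here, since the rule above doesn't spell that case out. Treat it as a thinking aid, not a screening tool.

```python
from enum import Enum
from typing import Optional


class Stance(Enum):
    INVEST = "want to be in"
    CAUTIOUS = "cautious"
    RESEARCH = "more research before committing"
    PASS = "pass"  # assumption: this case is not described in the post


def seed_and_soil(seed_is_real: Optional[bool], soil_is_ready: bool) -> Stance:
    """Map the two questions to a stance.

    seed_is_real: True, False, or None when you can't tell yet.
    soil_is_ready: whether infrastructure, capital, talent, and market are there.
    """
    if seed_is_real is None:
        # Can't tell whether the seed is real: keep researching.
        return Stance.RESEARCH
    if not seed_is_real:
        # Hypothetical branch added for completeness.
        return Stance.PASS
    # Seed is real: the soil decides between conviction and caution.
    return Stance.INVEST if soil_is_ready else Stance.CAUTIOUS


if __name__ == "__main__":
    # A real insight that arrived before its ecosystem, like AI in 1997.
    print(seed_and_soil(True, False).value)
```

The one design choice worth noting is that "I can't tell" gets its own value (`None`) rather than being folded into "no." Uncertainty about the seed calls for more work, not a rejection.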

What I'm Seeing Now That Reminds Me of That Summer

Here's what's exciting: right now, in 2026, I'm seeing the same kind of embryonic convergence that I saw at NASA in 1997, but at a completely different scale.

At SandboxAQ, a Neman Ventures portfolio company, the convergence of large language models and large quantitative models (what Jack Hidary calls LLMs + LQMs) is creating AI systems that can handle both natural language and mathematical reasoning. This is the computational biology problem I worked on at NASA, scaled up by a factor of a billion. The pattern recognition capabilities that seemed miraculous in a 1997 lab are now table stakes for a 2026 AI company.

At Figure AI, a Neman Ventures portfolio company, humanoid robots are learning to navigate physical environments through a combination of computer vision, reinforcement learning, and real-time adaptation. In 1997, the idea of a robot that could "see" was a PhD thesis. In 2026, Figure is building robots that can see, reason, manipulate objects, and improve their performance autonomously.

A recent Morgan Stanley research note suggested that AI infrastructure could face a meaningful power shortfall in the years ahead. That's not a problem that exists in a world where AI is a niche technology. That's a problem that exists in a world where AI is becoming the operating system of civilization. And that transition, from niche to ubiquitous, is happening faster than almost anyone predicted.

The Premed Kid Who Chose the Right Wrong Path

Looking back, I think the most important thing that happened to me at NASA wasn't the research itself. It was the realization that I was on the wrong career path for the right reasons.

I went to NASA as a premed student. I left understanding that my real passion was at the intersection of technology and human experience. I didn't have the language for it at the time, and it would take me several more years, a miserable stint in medical school, and the crushing failure of my first startup before I figured out how to channel that passion productively. But the seed was planted that summer.

When I eventually built JoonBug, I was applying technology to human experience (nightlife, social connection, entertainment). When I built EZ Texting, I was applying technology to human communication. And now, as a venture capitalist backing companies in AI, robotics, quantum, and longevity, I'm still doing the same thing: finding the places where transformative technology meets fundamental human need.

That through-line goes all the way back to a lab at Kennedy Space Center where a 20-year-old kid was trying to get a computer to recognize patterns in plant growth data. The tools have changed. The scale has changed. But the question is the same: how do we use technology to understand and enhance human life?

It just took 28 years for the soil to catch up with the seed.

And if the last 28 years are any indication, I believe the next 28 could be remarkable.

Disclosures

Shane Neman is the Manager of Neman Ventures LLC, an exempt investment adviser registered with the U.S. Securities and Exchange Commission (CRD# 330770). The views expressed in this post are personal and reflect the author’s opinions as of the date of publication. They do not constitute investment advice, an offer to sell, or a solicitation of an offer to buy any security or interest in any fund managed by Neman Ventures LLC or its affiliates.

Companies referenced in this post are illustrative examples selected to support the themes discussed and do not represent the entire Neman Ventures portfolio. They were not selected on the basis of performance. References to specific portfolio companies should not be interpreted as recommendations to buy, sell, or hold any security. Investing in private, early-stage companies involves substantial risk, including illiquidity and the potential loss of the entire investment. Past investment activity is not indicative of future results. Forward-looking statements reflect the author’s personal views and are subject to known and unknown risks; actual outcomes may differ materially.